You built the app with an AI tool. The output looks clean. The UI works. You uploaded the build, filled in the fields as best you could, and hit Submit.

Then Google Play came back with a policy violation.

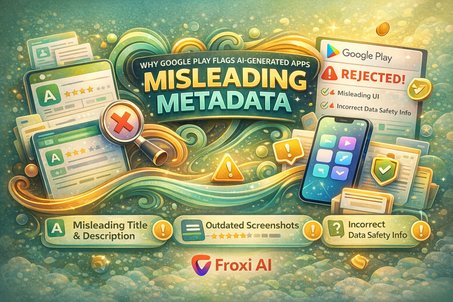

The rejection reason: misleading metadata.

This one catches founders off guard because the app isn't misleading. It does exactly what it's supposed to do. But Google's review system isn't evaluating your intentions — it's comparing your title, description, screenshots, and data safety answers against what the app actually does. And for AI-generated apps, that comparison often fails.

Here's why it happens, and how to fix it.

What Google Actually Means by Misleading Metadata

Misleading metadata doesn't mean you lied. It means something in your listing doesn't line up with the app's real behavior.

Google Play's review checks four things:

- Whether your app title and description match the features that exist in the build

- Whether your screenshots reflect the current UI — not a prototype or an earlier version

- Whether your Data Safety form accurately describes the data your app collects and shares

- Whether your permissions declaration matches the permissions actually requested in the code

When any one of these four doesn't line up, the submission gets flagged. For AI-generated apps, misalignment is unusually common, and it's almost always unintentional.

Why AI-Built Apps Are Especially Vulnerable

AI app builders generate code fast. That's the whole point. But the speed creates a specific kind of mismatch that shows up in Google Play review.

During development, the app changes. Features get added, renamed, or removed. A screen that existed in an early version disappears. A description written during the planning phase no longer matches the build. Permissions included in a template component stay in the manifest even after that component stops being used.

None of this is the developer's fault. It's just the natural result of building quickly. The problem is that Google Play's reviewers don't know your development history. They see the listing and the build, and they compare them.

The Four Patterns That Trigger This Rejection

1. Permissions That Outlived Their Purpose

AI builders often start from templates. Those templates include a standard set of permissions. As you customize the app, some of those permissions become unnecessary — but they stay in the manifest because removing them requires editing code directly.

Google Play sees a permission in your manifest, but no feature in your description or Data Safety form that would need it. That's a flag.

The fix: audit your permissions before submission. Every permission in the manifest needs a clear, specific justification in your Data Safety form. If you can't write that justification honestly, remove the permission.
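If you want to make that audit mechanical, here is a minimal Python sketch. It assumes you can get at the manifest XML and that you keep your one-sentence justifications in a simple dictionary. The function names, the sample manifest, and the sample justifications are all hypothetical, made up for illustration. Keep in mind that the manifest merged into your final build can include permissions pulled in by libraries, so it's worth running this against the merged manifest rather than only the one in your source tree.

```python
import xml.etree.ElementTree as ET

# Namespace Android uses for attributes such as android:name.
ANDROID_NS = "http://schemas.android.com/apk/res/android"


def declared_permissions(manifest_xml: str) -> list[str]:
    """Return every permission name requested in the manifest, sorted."""
    root = ET.fromstring(manifest_xml)
    names = set()
    for tag in ("uses-permission", "uses-permission-sdk-23"):
        for node in root.iter(tag):
            name = node.get(f"{{{ANDROID_NS}}}name")
            if name:
                names.add(name)
    return sorted(names)


def unjustified(manifest_xml: str, justifications: dict[str, str]) -> list[str]:
    """Return permissions that have no written justification (or a blank one)."""
    return [
        perm
        for perm in declared_permissions(manifest_xml)
        if not justifications.get(perm, "").strip()
    ]


# Hypothetical sample data, for illustration only.
SAMPLE_MANIFEST = """<manifest xmlns:android="http://schemas.android.com/apk/res/android" package="com.example.app">
    <uses-permission android:name="android.permission.INTERNET" />
    <uses-permission android:name="android.permission.CAMERA" />
    <uses-permission android:name="android.permission.ACCESS_FINE_LOCATION" />
</manifest>"""

SAMPLE_JUSTIFICATIONS = {
    "android.permission.INTERNET": "Sends prompts to the content API.",
    "android.permission.CAMERA": "",  # left blank: nobody could explain it
}

if __name__ == "__main__":
    for perm in unjustified(SAMPLE_MANIFEST, SAMPLE_JUSTIFICATIONS):
        print("No justification written for:", perm)
```

Anything this prints is a candidate for removal: either write the justification honestly or take the permission out.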

2. Screenshots That Show a Different App

This is one of the most common versions of the problem. You took screenshots early, when the app looked one way. By the time you submitted, the UI had changed: different layout, different copy, different flow.

A reviewer opens your listing, sees one app, then opens your build and sees another. That inconsistency is enough for a rejection, even if both versions of the app are perfectly functional.

The fix: take final screenshots after the build is locked. Not before. Treat the store listing as the last step, not the first.

3. Data Safety Answers That Don't Reflect Real Data Flows

The Data Safety form asks what data your app collects, why, and whether it's shared with third parties. Many founders fill this out based on what they intended the app to do — not what it actually does.

If your app uses Firebase for authentication, you're likely collecting email addresses and may be collecting device identifiers. If it uses an AI API to generate content, that API call may be logging query data. If it uses an analytics SDK, that SDK is collecting usage data.

All of that needs to be declared. When it isn't, review finds the discrepancy.

The fix: list every third-party SDK in the build, check what data each one collects, and declare it in the Data Safety form.
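One way to start is an inventory of the SDKs in the build. The sketch below, in Python, pulls dependency coordinates out of a Gradle build file and splits them into two piles: SDKs you have already reviewed, and SDKs you haven't looked at yet. Everything here is an assumption for illustration: the function names are hypothetical, the regex only handles simple quoted coordinates, and the `KNOWN_DATA` entries just mirror the examples in this article. Check each SDK's own data disclosure before you rely on them.

```python
import re

# Matches quoted Gradle coordinates like "group:artifact" or "group:artifact:version".
# Deliberately simple: it will not understand version catalogs or variables.
DEP_PATTERN = re.compile(r"""["']([\w.\-]+:[\w.\-]+)(?::[^"']*)?["']""")


def gradle_dependencies(build_file_text: str) -> list[str]:
    """Return the unique group:artifact coordinates found in the text, sorted."""
    return sorted({m.group(1) for m in DEP_PATTERN.finditer(build_file_text)})


def data_safety_worklist(build_file_text: str, known_data: dict[str, list[str]]):
    """Split dependencies into reviewed (with data types) and not yet reviewed."""
    reviewed, unreviewed = {}, []
    for dep in gradle_dependencies(build_file_text):
        if dep in known_data:
            reviewed[dep] = known_data[dep]
        else:
            unreviewed.append(dep)
    return reviewed, unreviewed


# Illustrative entries only, taken from this article's examples.
# Replace them with what each SDK's documentation actually says.
KNOWN_DATA = {
    "com.google.firebase:firebase-auth": ["email address", "device identifiers"],
    "com.google.firebase:firebase-analytics": ["usage data"],
}

# Hypothetical build file excerpt.
SAMPLE_BUILD_FILE = """
dependencies {
    implementation("com.google.firebase:firebase-auth")
    implementation 'com.google.firebase:firebase-analytics'
    implementation("com.squareup.okhttp3:okhttp:4.12.0")
}
"""

if __name__ == "__main__":
    reviewed, unreviewed = data_safety_worklist(SAMPLE_BUILD_FILE, KNOWN_DATA)
    for dep, data_types in reviewed.items():
        print(f"Declare for {dep}: {', '.join(data_types)}")
    for dep in unreviewed:
        print(f"Not reviewed yet: {dep}")
```

The "not reviewed yet" pile is the useful part. It's the list of libraries whose data collection you haven't checked against your Data Safety answers.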

4. Title and Description That Describe a Plan, Not a Product

AI-assisted description writing tends to be optimistic. It describes what the app could do at full scale, what you're planning to add next quarter, or what the ideal version of the experience looks like.

Google Play reviewers test what's in the build right now. If your description mentions a feature that isn't there, or frames the app as something more comprehensive than the actual MVP, that's misleading metadata.

The fix: write your description after the build is final, not before.

How to Audit Your Listing Before Submission

Before you submit to Google Play, run through this check:

| Check | How to Verify |
|---|---|
| Every permission has a declared justification | Open your manifest, list every permission, write one sentence explaining why each one exists |
| Screenshots match the current build | Take new screenshots the day you submit and never reuse older ones |
| Data Safety form reflects actual SDKs | List every third-party library you use and check what data each one collects |
| Description only describes what exists now | Read each sentence and ask: can a reviewer test this today? |
| Title doesn't overclaim | Remove words like 'ultimate', 'complete', or 'all-in-one' unless you can prove them |
| Support and privacy policy links are live | Open them in an incognito window and confirm they load and that the privacy policy covers the data your mobile app collects |
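The title check is the easiest one to automate. Here is a small Python sketch, assuming you keep your own list of words to watch for. The three words below are the ones named in the table; the function name and the sample titles are hypothetical. It's a reminder to look again, not a stand-in for reading the policy.

```python
import re

# Words from the checklist above; extend the tuple with your own.
OVERCLAIM_WORDS = ("ultimate", "complete", "all-in-one")


def overclaims(text: str, words: tuple[str, ...] = OVERCLAIM_WORDS) -> list[str]:
    """Return the watched words that appear in the text as whole words."""
    found = []
    for word in words:
        # Lookarounds stop 'complete' from matching inside 'completely'.
        pattern = rf"(?<![\w-]){re.escape(word)}(?![\w-])"
        if re.search(pattern, text, flags=re.IGNORECASE):
            found.append(word)
    return found


if __name__ == "__main__":
    # Hypothetical titles, for illustration only.
    for title in ("The Ultimate All-in-One Budget Planner", "Completely Simple Notes"):
        print(title, "->", overclaims(title))
```

For each flagged word, ask the same question as for the description: can a reviewer confirm this claim in the build you're submitting today?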

How Froxi AI Helps You Catch This Before Google Does

Froxi AI asks you to describe what your app actually does — not what you planned, not what you hope to add. It uses that description to generate your store listing and flag gaps between your answers and what your build reveals.

When you declare your permissions through Froxi AI's guided flow, it prompts you to justify each one in plain language. That exercise alone catches most Data Safety form problems before submission.

If you get a misleading metadata rejection, the Rejection Resolver reads the exact Google message and gives you a specific list of what to fix — not general advice, but the exact fields, the exact discrepancies, and the exact changes needed to resubmit cleanly.

The Simple Rule

Your listing should describe the app that exists in the build you're submitting — not the app you're building toward.

Write the description last. Take the screenshots last. Fill the Data Safety form last. When the listing describes what a reviewer can actually test, this rejection stops happening.